AI at Oberlin: An Interview with Pat Day

by CG

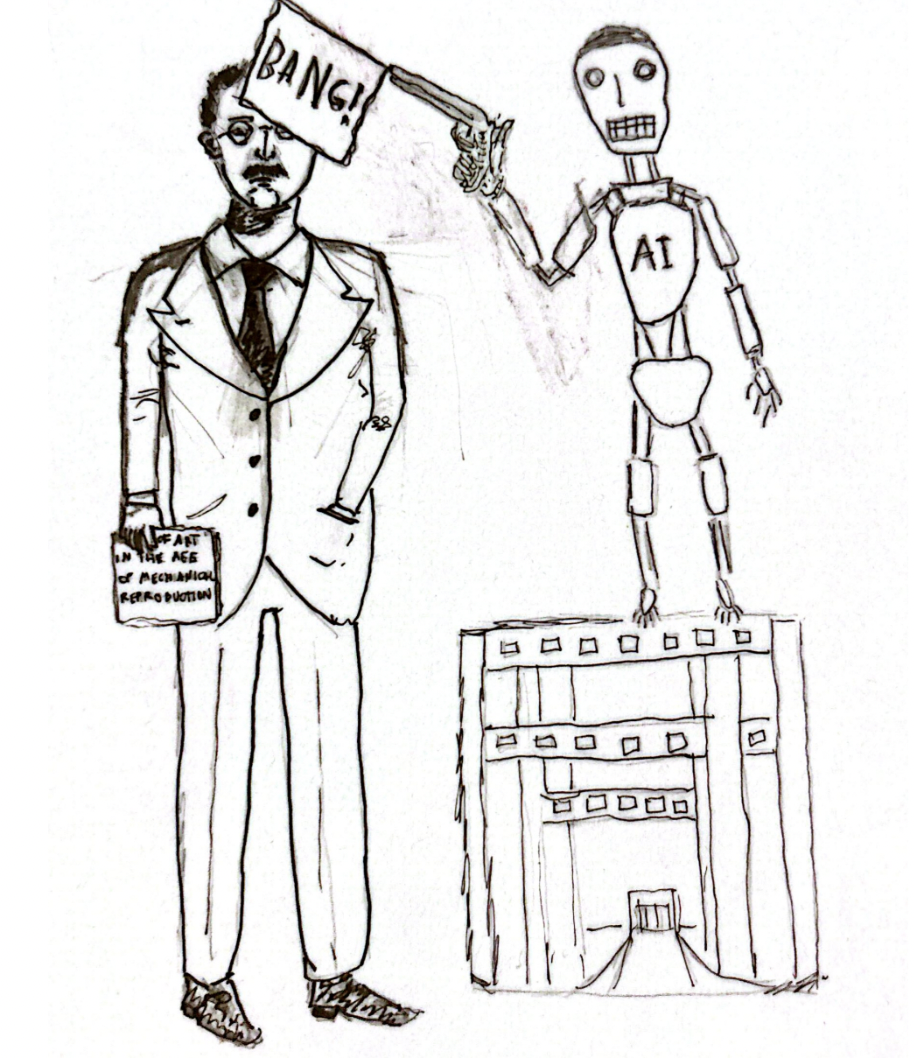

Illustration by Rishi and Angus, Contributors

CG: When did you first become aware of generative AI? What was your initial impression of this technology?

Pat Day: I started to hear about [generative AI] five or six years ago, and initially, I was really skeptical about the idea that it was “generative” because, in fact, it's probabilistic. The word “generative” implies a kind of holistic understanding, and it doesn't have that. So I felt like we could pretty much ignore it because it seemed like it was just a giant version of a spell checker, essentially. I mean, the notion of scraping the net to create this giant database with tags that allowed it to do so seemed overwhelmingly clunky. And it was! I mean, it was sort of easy to make fun of the early versions of it. But regardless, it's quite clear that none of these things were actually designed for education. I mean, Sam Altman has no conception of what education is, and neither does Bill Gates. This is where it starts to become something other than a day-to-day tool. I mean, Gates says “computers will replace teachers, the more computers we have, the fewer teachers we’ll need.” And so it does seem, particularly for the humanities, that [this technology] is a real challenge to what it is we do. I mean, what do we use it for? We use computers to write essays and to do research and we can't think of what parts of that would be done better by AI. But this has come upon us very strongly. I mean, when ChatGPT was first released, it really sent panic through the college. The iPhone was basically a device that naturalized being intimately involved with your computer, and everybody accepted that. But then, when it actually starts to come after people’s jobs, that becomes a different kind of thing for us to think about, both in an “I need to be employed” way, but also in an “I think this job is important and I don't think AI will do a good job at it” way.

CG: The use of AI seems to be a contentious issue at Oberlin. On the one hand, the Honor Code explicitly forbids the use of generative AI unless otherwise specified by a faculty member or the ODA; on the other hand, Oberlin is currently embarking on a so-called “Year of AI Exploration,” and is considering creating an AI minor. How are faculty members and administrators feeling about Oberlin’s current AI policies? What policies regarding AI do you implement in your classes?

PD: The Year of AI Exploration was approved by an elected faculty committee, but [information about the decision] didn't come until the beginning of the semester, and that sort of disturbed a lot of people, because the rollout didn't work. I mean, what exactly does it mean to be an AI-exploring institution? The tension between having AI and having rules that seem to say “we don't want it, except in extraordinary circumstances,” hasn’t been reconciled. But there was the idea that you couldn't deny the existence of AI, but you also couldn't force faculty to use it, or allow it to be used. So we live in this kind of ambiguous state, because we just haven't figured out what's going on. It is something we have to think about, but some faculty members are more inclined to say “we have to come to terms with this, and we shouldn’t reject the notion that we can find ways of using this.” Others are both enraged and terrified. For a lot of people in the humanities, it's sort of like the betrayal of 2,500 years of civilization [laughs]. And for others, it's just like “this is just not a machine that we should use, we shouldn't do this because it doesn't make any sense.” I think I tend to fall into that category. I don't see… I mean, it's not a database, it doesn't search databases, it doesn't understand an article if you ask it to read it, its summaries miss the entire point of the long-form written essay. I mean, I just don't know what we would do with it. It's sort of being advertised and presented and talked about as if it is basically a White Witch, a friendly wizard that will do all things for you. And, when you're teaching, you don't want students to have machines doing things for them! It just doesn't make any sense.

So, my sense of it is that our job right now, and this will change, but I think right now we are in a situation where [students] who are using [AI] at Oberlin College actually have experienced the same shock as the faculty. This is not something people grew up with. And we don't know how far it's spreading into high schools and junior high schools at this point, so at this moment our job is to do a lot of demystifying, to get rid of the “friendly wizard” idea. This is a situation in which telling people more facts about what this technology is is important. We need to stress the notion that it is a product, not a friendly wizard or a tool. And that is a really central point because the idea behind AI is that it will play the central organizing role in the economy. Right now, AI research is what's keeping the American economy afloat, and the people who [are involved with] AI are, to a great degree, connected with right-wing, MAGA politics. People have to remember that Peter Thiel is the guy who got JD Vance where he is now. So, right now we have to present this real information [to students]. I think that we need to make sure, particularly at the 100 and 200 level, that students are taught about writing and conducting research the “old school” way, because they need to know what the “old school” way was. If they go ahead and use AI and they don't know [those strategies], they don't know what they might have missed.

CG: To return briefly to the topic of a potential AI minor, I have some questions regarding what this minor would entail. To the best of your knowledge, what classes would count towards an AI minor? What would the purpose of this minor be? Are your colleagues enthusiastic about the creation of this minor?

PD: I think it's a very interesting thing for Oberlin to try to generate something out of [a product] that has no real academic base right now. I mean, we have the computer science department, but this “critical AI minor” is not that. [The minor would actually be oriented around] talking about the kinds of things I've been talking about here, and it's really striking that we want to build something that is not a version of something that's being done elsewhere. Like, we have a journalism minor, but there are journalism programs all over the world, so it's already established. This is not established. This is actually an attempt to create something with the resources we have now that will produce a useful and coherent address to a current problem. It is a very rare thing in academia to move this fast. And one of the reasons we have a minor is because everything in academia is organized around the notion of disciplinarity, and that allows not only for the siloed discipline, but it also allows for the idea of hybrid disciplines where things can come together. But usually the run-up time to saying, “we should have programs in this, and programs in that,” is much longer, and this [minor] seems to be being created in a highly politicized context. Nevertheless, it's an attempt to employ the college’s existing resources in an investigative way [so we can] understand what's going on with this technology. There is the idea that you need to understand the machine, the tool, but most of the work that's being done with this [minor focuses on] thinking about, “okay, what does this thing mean at this moment, and how do we understand what's going into it?” while also thinking about the issues with labor, the environment, its existence in education, and its implications for human culture and society. I still remain slightly skeptical, but I've kept trying to think, “what would we do otherwise in order to give [the subject] some shape and some organization?”

CG: In my experience as both a student and a Course Writing Associate, most Oberlin students that I know and work with seem to loathe AI. Meanwhile, I feel that some of the professors that I have had are far more amenable to pro-AI arguments, and are fairly open to allowing students to use the technology for certain assignments, albeit in a limited capacity. There seems to be this sentiment shared by some Gen-Xers and Baby Boomers that’s like “well, this is just the latest technological innovation, and using it is comparable to using a laptop or a smartphone,” or what have you. Obviously, I have also had many professors who are as adamantly anti-AI as their students, especially in the English department, but this is definitely still a trend that I have noticed. Have you had a problem with students using generative AI in your classes, or would you say that your students typically oppose the use of AI? Do you feel that your colleagues are as opposed to AI as your students? And if not, what do you think may account for this generational gap?

PD: At this point, I have no anxieties about AI taking over education. The students that I work with, I mean, I think they find it insulting to suggest that they would use ChatGPT, because a lot of students in the humanities are proud of how well they write, and they don't need a machine to do it for them. With faculty, part of our job, even though we may not like to think about it this way, is to adapt. I mean, the goal is to keep education working. So some [professors’] speculative ideas along the lines of, “well, maybe there's something productive we can do with it,” I understand. Personally, I'm not anxious to do that, because I really don't see what aspects of the writing process or the research process will be enhanced by this at this point.

And, I mean, the notion that it will ever become a genuine entity seems very, very remote to me, and very far off. In 1955, von Neumann thought it would take eight years [to develop artificial intelligence]. In 1970, Marvin Minsky thought it would take five years. I mean, Turing thought it was coming right after World War II. Nobody knows what human intelligence is anyway. Nobody knows what intelligence is! So I think the idea that we need to be ready to see what happens, as long as we're cautious, I can understand that. And there's always been a range of people who want to use more tech than others. I think overall, in the humanities, the skepticism and outrage is pretty intense, certainly in the English department. I mean, there's some moderate, “well, let's wait and see,” types, but there's no AI maniacs.

CG: Over the course of your time as an educator, you have watched the ways in which computers, the rise of the internet, smartphones, and other types of tech have fundamentally changed how students and professors navigate academic spaces. Do you have any final thoughts on the future of AI, and the ways in which it may impact higher education?

PD: Well, if it does have a major impact on education it will certainly bring Modernity as we know it into a completely different phase. It will make manifest the notion of computation and calculation, the notion of freestanding information and information gathering, and the idea of the automatization of activity. I mean, all of these things have been the big tensions of the modern world, and they were right from the beginning, from when La Mettrie wrote “Man a Machine,” in the 18th century, and really from Descartes onward. AI will either create a new rupture between the culture as it exists and a new culture, in the way that “the modern” did with what we now call “traditional cultures.” Or maybe it turns into utopia; who knows? I mean, I strongly doubt that [laughs]. Scottish writer Iain Banks wrote about this. He wrote science fiction novels, and he wrote other kinds of novels as well; he was one of the great Scottish Postmodernists, and in the Culture series, he created this world with this one civilization where they had a completely workable AI system which had created paradise. Most people in this society spent their time exploring things and living their lives and all the rest, and a small number of people who liked to work went out into the larger part of the galaxy in order to make sure that they controlled the crazy lunatics out there who were not smart enough to create these machines that would give them leisure. And I mean, Banks was a socialist, so he imagined this as a socialist economy. And the “tech boys” love it because they think it's about the domination of tech lords. They don't understand that this is a socialist society! So there's this sort of weird sense that nobody's understanding of this [technology] is very clear or coherent at this point. I'm interested in this partly because, yes, I watch Star Trek, but I understand it's fiction, as opposed to some of these guys who think, “no, it's prophecy.” [laughs].